Education technology pilot strategy for institutional scale

Most EdTech pilots succeed technically and fail institutionally. A platform hits its uptime targets, educators respond positively, integration flows run without errors — and then the renewal review stalls. The reason is almost always the same: the pilot was designed to validate features, not to survive a procurement committee.

This article is for IT directors, CTOs, and procurement leads at educational institutions and EdTech vendors who are preparing for, or already stuck in, the transition from a controlled pilot to multi-campus or district-wide deployment. It sets out a structured framework covering governance ownership, regional compliance requirements, integration durability, and funding transition, with direct comparisons between U.S. and European institutional contexts.

An education technology pilot strategy that accounts for renewal criteria from the start reduces friction during expansion reviews. The variables that matter most are not classroom metrics. They are governance continuity, regulatory alignment (FERPA in the U.S., GDPR in the EU), integration capacity at institutional load, and the ability to move from grant-based to operational funding without re-tendering.

What an education technology pilot strategy actually covers

An education technology pilot strategy is a structured framework that limits technical scope during initial deployment while documenting the scaling assumptions, governance continuity, compliance obligations, and funding transition paths that will determine whether expansion is approved.

That definition matters because most pilots document the wrong things. They capture uptime, engagement rates, and feature adoption — metrics that satisfy instructional goals but tell an institutional review committee almost nothing about what happens when the system expands to five campuses or when the grant funding ends.

In U.S. K–12 districts, pilots typically operate within a single school or grade band. In European public systems, the unit is often a municipality or school cluster. In higher education, it may cover one department or academic program. The boundaries differ, but the documentation gap is consistent: pilots rarely specify what governance looks like at scale, who owns configuration after the pilot team disbands, or how data retention policies apply once the cohort changes.

Without that documentation, expansion decisions become reactive rather than planned. Institutions revisit assumptions that should have been resolved before the first classroom went live.

Bluepes EdTech software development services include architecture and governance reviews specifically for teams facing this transition.

Why technically successful pilots still fail renewal reviews

Renewal decisions are not extensions of pilot evaluations. They involve different stakeholders, different criteria, and a different risk calculus.

Institutional review committees, which in U.S. districts often include legal counsel, compliance officers, and finance teams, evaluate whether the system can operate sustainably at full scale.

The European Commission's Digital Education Action Plan explicitly lists systemic integration and sustainability as criteria for digital adoption across EU member states. The U.S. Student Privacy Policy Office defines institutional responsibilities for student data handling that directly affect how pilot documentation is assessed during renewal.

What institutional review committees actually evaluate

The gap between pilot success and renewal approval tends to cluster around five dimensions:

- Governance durability — can the configuration ownership and approval workflows transfer to permanent institutional roles?

- Cross-campus compatibility — does the data model and identity management work across multiple sites with different administrative structures?

- Budget sustainability — can the cost structure survive the transition from innovation or grant funding to recurring operational expenditure?

- Regulatory exposure — are data retention, vendor classification, and lawful processing basis documented and auditable?

- Vendor dependency — what is the exit risk if the relationship changes after district-wide adoption?
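One way to make the five dimensions operational is to track them as an explicit checklist, where each dimension must point to a supporting document before the pilot enters review. The sketch below assumes a simple mapping from dimension to evidence; the dimension identifiers and file names are hypothetical, not part of any standard.

```python
from dataclasses import dataclass, field

# The five dimensions from the list above, as hypothetical identifiers.
DIMENSIONS = (
    "governance_durability",
    "cross_campus_compatibility",
    "budget_sustainability",
    "regulatory_exposure",
    "vendor_dependency",
)


@dataclass
class ReadinessReview:
    # Maps a dimension to the document that addresses it (file names are illustrative).
    evidence: dict[str, str] = field(default_factory=dict)

    def gaps(self) -> list[str]:
        """Dimensions with no supporting document yet, in checklist order."""
        return [d for d in DIMENSIONS if not self.evidence.get(d)]

    def ready_for_review(self) -> bool:
        """True only when every dimension has supporting evidence."""
        return not self.gaps()


review = ReadinessReview(evidence={
    "governance_durability": "governance-transfer-plan.pdf",
    "regulatory_exposure": "data-processing-record.xlsx",
})
print(review.gaps())
# ['cross_campus_compatibility', 'budget_sustainability', 'vendor_dependency']
```

The value is less in the code than in the rule it encodes: a dimension without a named document counts as unresolved, regardless of how well the pilot performed.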

A pilot that addresses all five before the first review cycle is in a structurally different position than one that addresses them during it.

How structural alignment differs across U.S. and European education systems

An education technology pilot strategy must explicitly map to the regulatory and procurement environment where the institution operates. The frameworks differ by region, but the institutional pressure is shared: demonstrating that the system is governable, compliant, and affordable beyond the pilot cohort.

Governance continuity and ownership transfer

Temporary pilot governance rarely survives expansion. Whoever manages configuration during the pilot — whether a project team, a vendor-side onboarding lead, or a school administrator — typically does not become the long-term owner. The question of who does must be answered before renewal, not during it.

Effective governance transfer documentation covers which institutional body approves system configuration changes, how role-based permissions escalate across campuses, and who holds vendor relationship accountability at scale.
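The three transfer items above can be checked mechanically before renewal: each responsibility needs an owner, and that owner should be a permanent institutional role rather than a pilot-era one. A minimal sketch, assuming hypothetical role names; the set of pilot-only roles is something each institution would define for itself.

```python
# Responsibilities named in the governance transfer documentation above.
RESPONSIBILITIES = (
    "configuration_change_approval",
    "permission_escalation",
    "vendor_relationship_accountability",
)

# Roles that exist only while the pilot runs (hypothetical examples).
PILOT_ONLY_ROLES = {"pilot_project_team", "vendor_onboarding_lead"}


def transfer_issues(assignments: dict[str, str]) -> list[str]:
    """List responsibilities that are unassigned or still held by a pilot-only role."""
    issues = []
    for responsibility in RESPONSIBILITIES:
        owner = assignments.get(responsibility)
        if owner is None:
            issues.append(f"{responsibility}: no owner")
        elif owner in PILOT_ONLY_ROLES:
            issues.append(f"{responsibility}: still owned by pilot role '{owner}'")
    return issues


assignments = {
    "configuration_change_approval": "district_it_governance_board",
    "permission_escalation": "vendor_onboarding_lead",
}
for issue in transfer_issues(assignments):
    print(issue)
```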

For teams designing governance structures that will transfer cleanly to institutional ownership, the role-based access design for education systems covers permission architecture in multi-campus environments.

Funding transition from pilot budget to operational expenditure

Pilot funding in U.S. schools commonly comes from Title IV grants, E-Rate allocations, or state innovation programs. In European public institutions, pilots are often funded through EU digital education initiatives or regional ministry programs. Neither funding source was designed to carry a district-wide deployment indefinitely.

The transition to recurring operational expenditure needs to be modeled before expansion is proposed — not after the budget committee asks for it. This means projecting infrastructure growth, license scaling across user volume, compliance audit overhead, and the staff cost of system administration at institutional scale.
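A useful first output of that model is the per-year shortfall: the amount the operational budget must absorb once pilot funding tapers off. The function below is a hypothetical sketch; the cost and grant figures are invented for illustration, not benchmarks.

```python
def funding_gap(annual_costs: list[int], grant_by_year: list[int]) -> list[int]:
    """Per-year amount the operational budget must cover after grant funding is applied.

    Years past the end of grant_by_year are treated as having no grant funding.
    """
    gaps = []
    for year, cost in enumerate(annual_costs):
        grant = grant_by_year[year] if year < len(grant_by_year) else 0
        # A grant larger than the year's cost does not carry over in this sketch.
        gaps.append(max(cost - grant, 0))
    return gaps


# Illustrative figures only: costs rise with expansion, the grant covers two years.
costs = [120_000, 260_000, 410_000, 430_000]
grant = [150_000, 100_000]
print(funding_gap(costs, grant))  # [0, 160000, 410000, 430000]
```

Presented this way, the budget committee sees the year in which the full cost lands on operational expenditure rather than discovering it at renewal.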

Without that model, scaling discussions become negotiation exercises rather than structured budget submissions.

If your team is already preparing for the transition from pilot to multi-year operational budget, a conversation with our engineers will clarify the cost and governance assumptions before they become line items.

How to align an education technology pilot strategy with long-term planning

The following five steps apply to both U.S. and EU contexts, though the specifics of Step 1 diverge significantly by region.

Step 1 — Define the regional regulatory context.

Document whether the deployment operates under FERPA and state-level privacy laws (U.S.), GDPR and national supervisory authority guidance (EU), or both. The European Data Protection Board publishes framework-level interpretation for public institutions, including lawful basis requirements and data minimization obligations that apply from the first cohort, not only at scale.
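The regional split in Step 1 can be captured as a lookup that produces the list of obligations a deployment must document. This is a hypothetical documentation checklist built only from the items named in this article; it is not a legal classification, and a real list needs input from counsel.

```python
# Documentation obligations per regulatory context, drawn from Step 1.
# Illustrative checklist labels only; not legal advice.
OBLIGATIONS = {
    "US": [
        "FERPA student record protections",
        "applicable state-level privacy statutes",
        "district procurement policy",
    ],
    "EU": [
        "GDPR lawful basis for processing",
        "data minimization and purpose limitation",
        "national supervisory authority guidance",
    ],
}


def obligations_for(regions: set[str]) -> list[str]:
    """Combine obligations for every region the deployment operates in."""
    unknown = regions - set(OBLIGATIONS)
    if unknown:
        raise ValueError(f"no obligation list for: {sorted(unknown)}")
    # Sort regions so the output order is stable across runs.
    return [item for region in sorted(regions) for item in OBLIGATIONS[region]]


for item in obligations_for({"US", "EU"}):
    print(item)
```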

Step 2 — Model expansion boundaries explicitly.

Specify how the system scales: by campus, department, grade band, or municipality. Ambiguous scope creates procurement friction later, particularly in EU public institutions where significant scope changes may trigger formal re-tendering under public procurement directives.
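Making the expansion unit explicit lets a proposed rollout be compared against what the pilot scope actually documented. The sketch below is hypothetical: a non-empty result only flags units for procurement review, because whether a change is significant enough to require re-tendering is a legal determination under the applicable rules, not something code can decide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExpansionScope:
    unit: str                   # e.g. "campus", "department", "grade_band", "municipality"
    documented: frozenset[str]  # units named in the approved scope documentation


def out_of_scope(scope: ExpansionScope, proposed: set[str]) -> set[str]:
    """Units in a proposed expansion that the documented scope does not cover.

    A non-empty result is a prompt for procurement review, not a legal finding.
    """
    return proposed - scope.documented


scope = ExpansionScope(unit="campus", documented=frozenset({"north", "south", "east"}))
print(sorted(out_of_scope(scope, {"north", "west"})))  # ['west']
```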

Step 3 — Map governance escalation paths.

Define who assumes configuration ownership when pilot status ends and who holds authority to approve system changes at each stage of expansion. This is distinct from the pilot project governance and needs to be documented separately.
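An escalation map makes this distinction concrete: one approver per expansion stage, with each handoff listed so it can be documented separately from pilot governance. The stage names (pilot, validation, institutional) and all role names below are hypothetical.

```python
# Hypothetical escalation map: expansion stage -> body that approves system changes.
# Insertion order defines the stage sequence.
ESCALATION = {
    "pilot": "pilot_project_lead",
    "validation": "district_it_director",
    "institutional": "district_technology_governance_board",
}
STAGES = tuple(ESCALATION)


def approver(stage: str) -> str:
    """Return who approves configuration changes at the given stage."""
    if stage not in ESCALATION:
        raise ValueError(f"unknown stage {stage!r}; expected one of {STAGES}")
    return ESCALATION[stage]


def handoffs() -> list[tuple[str, str, str]]:
    """Stage transitions where approval authority moves to a different owner."""
    return [
        (current, nxt, ESCALATION[nxt])
        for current, nxt in zip(STAGES, STAGES[1:])
        if ESCALATION[current] != ESCALATION[nxt]
    ]


for current, nxt, owner in handoffs():
    print(f"{current} -> {nxt}: approval moves to {owner}")
```

Each tuple returned by `handoffs()` corresponds to a transfer that needs its own entry in the governance documentation.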

Step 4 — Project multi-year cost impact.

Estimate infrastructure growth, license volume scaling, support staffing, and compliance overhead across a three-to-five-year horizon. This projection is what procurement committees and finance teams actually review, not the pilot's engagement metrics.
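Step 4 can be drafted as a small projection model before it becomes a spreadsheet. This sketch assumes compound user growth, linear license and infrastructure pricing, and support staffing that scales in whole full-time equivalents (FTEs); every parameter name and figure is a hypothetical placeholder, not a pricing benchmark.

```python
import math
from dataclasses import dataclass


@dataclass
class CostAssumptions:
    # Every parameter is a hypothetical placeholder; replace with institutional data.
    users_year1: int
    user_growth: float          # fractional growth per year, e.g. 0.5 = +50%
    license_per_user: float     # annual license cost per user
    infra_base: float           # fixed annual infrastructure cost
    infra_per_user: float       # variable infrastructure cost per user
    staff_cost: float           # annual cost of one administration/support FTE
    users_per_staff: int        # users one FTE can support
    compliance_overhead: float  # fixed annual audit and compliance cost


def project_costs(a: CostAssumptions, years: int = 5) -> list[dict]:
    """Project annual cost lines, compounding user growth year over year."""
    rows = []
    users = float(a.users_year1)
    for year in range(1, years + 1):
        staff = math.ceil(users / a.users_per_staff)  # staffing grows in whole FTEs
        row = {
            "year": year,
            "users": round(users),
            "licenses": users * a.license_per_user,
            "infrastructure": a.infra_base + users * a.infra_per_user,
            "support": staff * a.staff_cost,
            "compliance": a.compliance_overhead,
        }
        row["total"] = row["licenses"] + row["infrastructure"] + row["support"] + row["compliance"]
        rows.append(row)
        users *= 1 + a.user_growth
    return rows


assumptions = CostAssumptions(
    users_year1=2_000, user_growth=0.5, license_per_user=8.0,
    infra_base=20_000.0, infra_per_user=2.0, staff_cost=70_000.0,
    users_per_staff=5_000, compliance_overhead=15_000.0,
)
for row in project_costs(assumptions):
    print(row["year"], row["users"], row["total"])
```

With these placeholder figures the support line jumps in year 4 as users cross an FTE threshold. Step changes like that are exactly what a finance team will ask about, and a model makes them visible before the budget submission.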

Step 5 — Validate integration durability.

Stress-test the integration architecture against institutional-scale loads before proposing expansion. The EdTech batch integration architecture covers throughput, data reconciliation, and error handling patterns relevant to multi-campus deployments.
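Of the concerns named in Step 5, data reconciliation is the simplest to automate and rerun at each load level. A minimal sketch, assuming a CSV export from the source system keyed by a hypothetical `student_id` column and a set of IDs the target platform reports after import; a production check would also compare field values, not just IDs.

```python
import csv
import io


def reconcile(source_csv: str, imported_ids: set[str], key: str = "student_id") -> dict[str, set[str]]:
    """Compare record IDs in a source export with IDs the target reports as imported."""
    source_ids = set()
    for row in csv.DictReader(io.StringIO(source_csv)):
        value = (row.get(key) or "").strip()  # skip blank or missing IDs
        if value:
            source_ids.add(value)
    return {
        "missing_in_target": source_ids - imported_ids,
        "unexpected_in_target": imported_ids - source_ids,
    }


export = "student_id,campus\nS001,north\nS002,north\nS003,south\n"
result = reconcile(export, imported_ids={"S001", "S003", "S999"})
print(sorted(result["missing_in_target"]))     # ['S002']
print(sorted(result["unexpected_in_target"]))  # ['S999']
```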

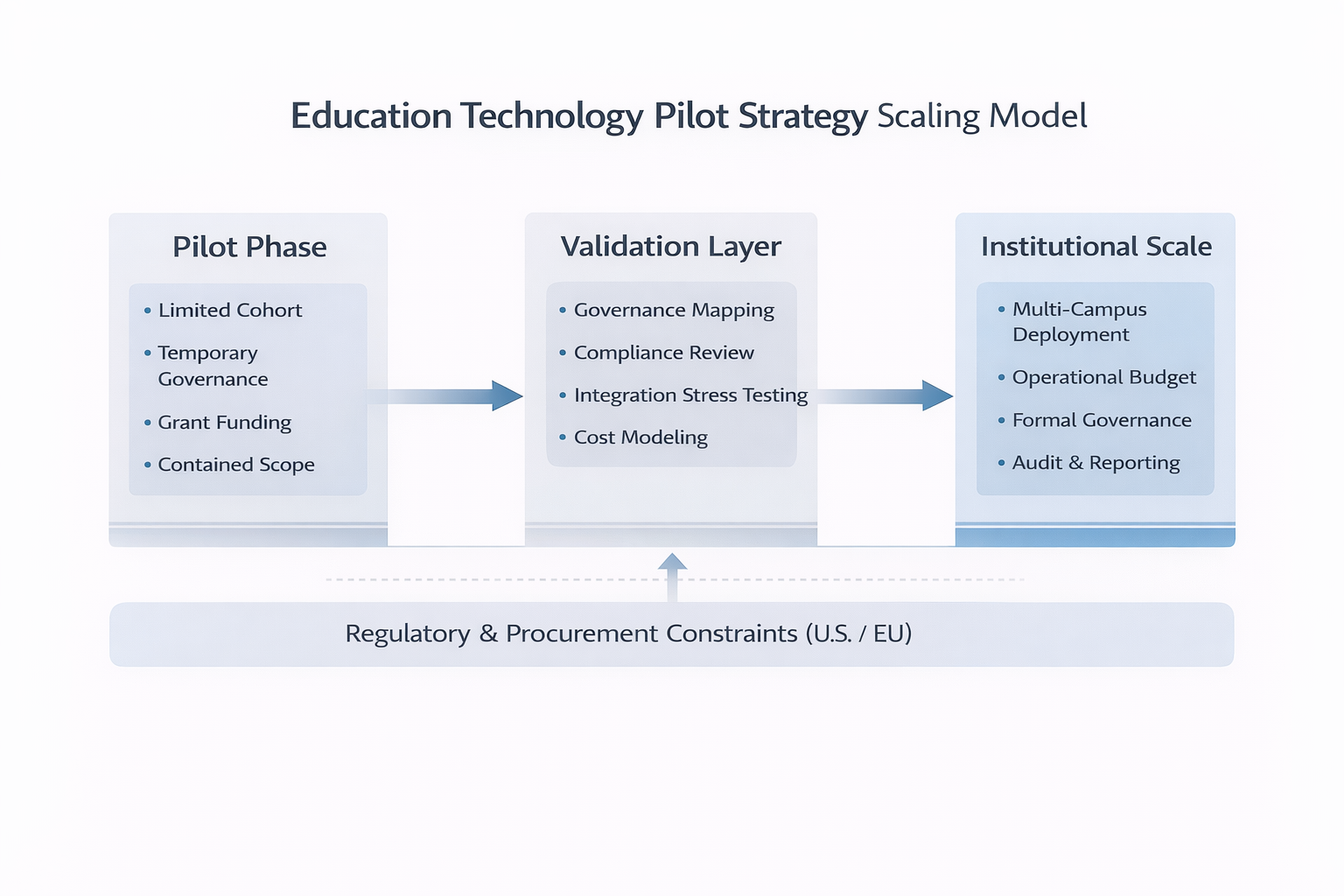

The diagram below maps how these five steps translate into a three-stage scaling model — from contained pilot conditions through the validation layer into full institutional deployment.

education-technology-pilot-strategy-scaling-model

The three-stage scaling model maps pilot-phase assumptions to the governance, compliance, and integration requirements that determine institutional approval.

Comparing pilot-only design with institutional-ready design

The same five dimensions look different depending on whether the pilot design treated long-term alignment as a core requirement or as a future concern.

Institutional-ready design does not increase technical complexity during the pilot. It clarifies the assumptions that will determine whether the renewal committee approves expansion.

Integration durability beyond initial cohorts

At pilot scale, integration loads are contained. A limited cohort accessing an LMS, SIS, and assessment platform through a single campus identity provider generates manageable throughput. At institutional scale, the same architecture handles concurrent access across hundreds of classrooms, multiple identity federation points, and district-wide reporting workflows.

The questions that need answers before expansion is approved:

- Can ingestion pipelines handle district-wide concurrency without throughput degradation?

- Will role-based access and permission escalation work across campuses with different administrative structures?

- Are audit logs retained for the timeframes required under FERPA or GDPR?

- Is the reporting layer structured for board-level dashboards and compliance submissions?
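For the concurrency question, a rough screening check compares load-test results against concurrency extrapolated from the pilot. This sketch rests on loud assumptions: peak concurrency scales linearly with enrolled users, and a hypothetical safety factor covers synchronized peaks such as district-wide assessment windows, which linear scaling tends to understate. It is a screening calculation, not a substitute for an actual load test.

```python
import math


def concurrency_headroom(
    pilot_users: int,
    pilot_peak_concurrent: int,
    target_users: int,
    tested_capacity: int,
    safety_factor: float = 1.5,
) -> float:
    """Ratio of load-tested capacity to projected peak concurrency at full scale.

    Assumes peak concurrency scales linearly with user count; values below 1.0
    mean the tested capacity falls short of the projection.
    """
    required = math.ceil(target_users * pilot_peak_concurrent * safety_factor / pilot_users)
    return tested_capacity / required


# Hypothetical figures: 400 pilot users peaked at 120 concurrent sessions.
headroom = concurrency_headroom(400, 120, target_users=20_000, tested_capacity=7_500)
print(f"headroom: {headroom:.2f}")  # headroom: 0.83
```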

Integration durability is a renewal criterion, not an implementation detail. Piloting an EdTech platform without simulating institutional-scale integration loads creates a category of risk that becomes visible only after the expansion decision is made — when it is significantly more expensive to resolve.

For teams building integration architecture that needs to carry institutional weight, proof-of-concept and pilot development services include integration durability validation as part of the engagement scope.

Regulatory and procurement constraints by region

U.S. context: FERPA, state laws, and district procurement

In U.S. K–12 environments, pilots involving student data operate under FERPA's student record protection requirements, applicable state-level privacy statutes, and district-level procurement policies. Renewal decisions frequently involve legal review of vendor classification, data ownership terms, and retention policies.

A pilot that does not document lawful data handling from the outset creates a compliance gap that surfaces during renewal — not during deployment, when it would be easier to address. Multi-year contracts in U.S. districts commonly require board approval and cost transparency projections, making the funding transition modeling in Step 4 a structural prerequisite, not an optional analysis.
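Retention policies are easier to defend in legal review when configured retention is checked against a documented policy window. The sketch below assumes one hypothetical window, with a minimum driven by audit needs and a maximum driven by the retention schedule, applied to every data store; in practice different record types usually carry different schedules, and the actual periods come from counsel, not from code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetentionPolicy:
    # Both bounds come from the institution's own legal review; values here are placeholders.
    min_days: int  # keep at least this long (e.g. audit requirements)
    max_days: int  # delete after this long (retention schedule)


def retention_violations(configured: dict[str, int], policy: RetentionPolicy) -> dict[str, str]:
    """Flag data stores whose configured retention falls outside the policy window."""
    violations = {}
    for store, days in configured.items():
        if days < policy.min_days:
            violations[store] = f"retains {days}d, policy requires at least {policy.min_days}d"
        elif days > policy.max_days:
            violations[store] = f"retains {days}d, policy allows at most {policy.max_days}d"
    return violations


policy = RetentionPolicy(min_days=365 * 3, max_days=365 * 7)
configured = {"audit_log": 365, "gradebook_export": 365 * 5, "raw_clickstream": 365 * 10}
for store, problem in retention_violations(configured, policy).items():
    print(f"{store}: {problem}")
```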

European context: GDPR and public procurement directives

In the EU, lawful basis for data processing must be documented before scaling, not retroactively. Data minimization and purpose limitation apply from the first deployment phase. National supervisory authorities enforce the GDPR, and their interpretation varies by member state even under the same regulation.
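Documenting lawful basis before scaling can start with a processing inventory in which every personal-data field carries a basis and a purpose. A minimal sketch, assuming hypothetical field names; the six basis identifiers correspond to the lawful bases listed in GDPR Article 6(1). The check confirms that the record is complete, not that the chosen basis is correct, which remains a question for the data protection officer or counsel.

```python
from dataclasses import dataclass
from typing import Optional

# The six lawful bases listed in GDPR Article 6(1), as short identifiers.
LAWFUL_BASES = {
    "consent",
    "contract",
    "legal_obligation",
    "vital_interests",
    "public_task",
    "legitimate_interests",
}


@dataclass(frozen=True)
class ProcessingEntry:
    data_field: str
    lawful_basis: Optional[str]
    purpose: Optional[str]


def inventory_gaps(entries: list[ProcessingEntry]) -> list[str]:
    """Flag fields that lack a recognized lawful basis or a documented purpose.

    Checks completeness of the record only; whether a basis is appropriate
    is a legal judgment this function cannot make.
    """
    gaps = []
    for entry in entries:
        if entry.lawful_basis not in LAWFUL_BASES:
            gaps.append(f"{entry.data_field}: missing or unrecognized lawful basis")
        if not entry.purpose:
            gaps.append(f"{entry.data_field}: no documented purpose")
    return gaps


entries = [
    ProcessingEntry("student_name", "public_task", "enrollment and course delivery"),
    ProcessingEntry("quiz_scores", "public_task", "formative assessment"),
    ProcessingEntry("device_location", None, None),  # collected by default, never justified
]
for gap in inventory_gaps(entries):
    print(gap)
```

A field that cannot be given a purpose is also a data minimization candidate: the simplest fix is often to stop collecting it before the first cohort.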

Public procurement rules in many EU countries require formal re-tendering if deployment scope changes significantly after the pilot phase. This makes structural foresight during pilot design not just strategically useful but procedurally necessary.

For scoping a pilot in a way that preserves procurement flexibility during expansion reviews, the EdTech pilot containment strategy covers the boundaries and documentation approach that keep options open for formal expansion.

Key takeaways

- An education technology pilot strategy must document scaling assumptions, governance ownership, and compliance obligations before the first cohort goes live — not during renewal review.

- Institutional review committees evaluate governance durability, funding sustainability, and regulatory exposure — not classroom engagement metrics alone.

- U.S. and EU regulatory frameworks create different escalation paths: FERPA and state privacy laws in the U.S., GDPR and public procurement directives in Europe.

- The transition from grant-based pilot funding to operational expenditure must be financially modeled before expansion is proposed to a budget committee.

- Integration durability at institutional scale must be validated during the pilot phase — throughput assumptions from a limited-cohort deployment will not automatically transfer to district-wide loads.

Conclusion

A pilot that succeeds in the classroom can still fail the institution if it was designed to validate features rather than demonstrate operational viability. The governance structures, compliance documentation, and funding models that institutional review committees require are not afterthoughts — they are design criteria that either exist in the pilot architecture from the start or get assembled reactively under deadline pressure.

The difference between a pilot that converts to a district-wide contract and one that stalls is rarely technical. It comes down to whether the documentation exists, whether governance ownership is defined, and whether the cost model is ready when the procurement committee asks for it.

Bluepes works with EdTech vendors and educational institutions to assess structural alignment before renewal decisions and budget submissions. If you are preparing for expansion beyond pilot scope, review your pilot's institutional readiness before the next renewal cycle.
